- Sql Server Database Performance Tuning

- Sql Server Automatic Tuning

- Azure Sql Auto Tuning

- Azure Sql Database Automatic Tuning

- Azure Sql Managed Instance Auto Tuning

- Azure Sql Data Warehouse Performance Tuning

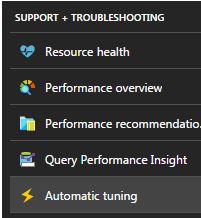


Once you have identified a performance issue that you are facing with SQL Database, this article is designed to help you:

- Tune your application and apply some best practices that can improve performance.

- Tune the database by changing indexes and queries to more efficiently work with data.

This article assumes that you have already worked through the Azure SQL Database advisor recommendations and the Azure SQL Database auto-tuning recommendations. It also assumes that you have reviewed An overview of monitoring and tuning and its related articles about troubleshooting performance issues. Additionally, this article assumes that you do not have a performance issue related to CPU resources that can be resolved by increasing the compute size or service tier to provide more resources to your database.

Tune your application

In traditional on-premises SQL Server, the process of initial capacity planning often is separated from the process of running an application in production. Hardware and product licenses are purchased first, and performance tuning is done afterward. When you use Azure SQL Database, it's a good idea to interweave the process of running an application and tuning it. With the model of paying for capacity on demand, you can tune your application to use the minimum resources needed now, instead of over-provisioning hardware based on guesses about an application's future growth, which often are incorrect. Some customers might choose not to tune an application, and instead choose to over-provision hardware resources. This approach might be a good idea if you don't want to change a key application during a busy period. However, tuning an application can minimize resource requirements and lower monthly bills when you use the service tiers in Azure SQL Database.

Application characteristics

Although Azure SQL Database service tiers are designed to improve performance stability and predictability for an application, some best practices can help you tune your application to better take advantage of the resources at a compute size. Although many applications have significant performance gains simply by switching to a higher compute size or service tier, some applications need additional tuning to benefit from a higher level of service. For increased performance, consider additional application tuning for applications that have these characteristics:

- Applications that have slow performance because of 'chatty' behavior

  Chatty applications make excessive data access operations that are sensitive to network latency. You might need to modify these kinds of applications to reduce the number of data access operations to the SQL database. For example, you might improve application performance by using techniques like batching ad hoc queries or moving the queries to stored procedures. For more information, see Batch queries.

- Databases with an intensive workload that can't be supported by an entire single machine

  Databases that exceed the resources of the highest Premium compute size might benefit from scaling out the workload. For more information, see Cross-database sharding and Functional partitioning.

- Applications that have sub-optimal queries

  Applications, especially those in the data access layer, that have poorly tuned queries might not benefit from a higher compute size. This includes queries that lack a WHERE clause, have missing indexes, or have outdated statistics. These applications benefit from standard query performance-tuning techniques. For more information, see Missing indexes and Query tuning and hinting.

- Applications that have sub-optimal data access design

  Applications that have inherent data access concurrency issues, for example deadlocking, might not benefit from a higher compute size. Consider reducing round trips against the Azure SQL Database by caching data on the client side with the Azure Caching service or another caching technology. See Application-tier caching.

Tune your database

In this section, we look at some techniques that you can use to tune Azure SQL Database to gain the best performance for your application and run it at the lowest possible compute size. Some of these techniques match traditional SQL Server tuning best practices, but others are specific to Azure SQL Database. In some cases, you can examine the consumed resources for a database to find areas to further tune and extend traditional SQL Server techniques to work in Azure SQL Database.

Identifying and adding missing indexes

A common problem in OLTP database performance relates to the physical database design. Often, database schemas are designed and shipped without testing at scale (either in load or in data volume). Unfortunately, the performance of a query plan might be acceptable on a small scale but degrade substantially under production-level data volumes. The most common source of this issue is the lack of appropriate indexes to satisfy filters or other restrictions in a query. Often, a missing index manifests as a table scan when an index seek could suffice.

In this example, the selected query plan uses a scan when a seek would suffice:

Azure SQL Database can help you find and fix common missing index conditions. DMVs that are built into Azure SQL Database look at query compilations in which an index would significantly reduce the estimated cost to run a query. During query execution, SQL Database tracks how often each query plan is executed, and tracks the estimated gap between the executing query plan and the imagined one where that index existed. You can use these DMVs to quickly guess which changes to your physical database design might improve overall workload cost for a database and its real workload.

You can use this query to evaluate potential missing indexes:

In this example, the query resulted in this suggestion:

After it's created, that same SELECT statement picks a different plan, which uses a seek instead of a scan, and then executes the plan more efficiently:

The key insight is that the IO capacity of a shared, commodity system is more limited than that of a dedicated server machine. There's a premium on minimizing unnecessary IO to take maximum advantage of the system in the DTU of each compute size of the Azure SQL Database service tiers. Appropriate physical database design choices can significantly improve the latency for individual queries, improve the throughput of concurrent requests handled per scale unit, and minimize the costs required to satisfy the query. For more information about the missing index DMVs, see sys.dm_db_missing_index_details.
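As a rough local illustration of the scan-versus-seek difference described in this section, the following Python sketch uses the standard-library sqlite3 module as a stand-in engine. This is an assumption made only so the example can run anywhere: SQLite is not Azure SQL Database, its plan text differs from SQL Server's, and it has no missing index DMVs. The table name orders_demo and the index name ix_orders_demo_customer are hypothetical.

```python
import sqlite3

def plan_for(conn, sql):
    """Return the plan steps SQLite reports for a statement."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    # The last column of each row is the human-readable plan step.
    return " | ".join(row[-1] for row in rows)

# In-memory database with a hypothetical table and 20,000 rows.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders_demo (order_id INTEGER PRIMARY KEY, customer_id INTEGER)"
)
conn.executemany(
    "INSERT INTO orders_demo (customer_id) VALUES (?)",
    [(i,) for i in range(20000)],
)

query = "SELECT order_id FROM orders_demo WHERE customer_id = 4"

# Without an index on customer_id, the filter needs a full table scan.
plan_before = plan_for(conn, query)

# After the index exists, the same statement can search the index instead.
conn.execute("CREATE INDEX ix_orders_demo_customer ON orders_demo (customer_id)")
plan_after = plan_for(conn, query)

print("before:", plan_before)
print("after: ", plan_after)
```

The statement itself does not change between the two runs. Only the physical design changes, which is the same lever the missing index DMVs point you toward.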

Query tuning and hinting

The query optimizer in Azure SQL Database is similar to the traditional SQL Server query optimizer. Most of the best practices for tuning queries and understanding the reasoning model limitations for the query optimizer also apply to Azure SQL Database. If you tune queries in Azure SQL Database, you might get the additional benefit of reducing aggregate resource demands. Your application might be able to run at a lower cost than an un-tuned equivalent because it can run at a lower compute size.

An example that is common in SQL Server and which also applies to Azure SQL Database is how the query optimizer 'sniffs' parameters. During compilation, the query optimizer evaluates the current value of a parameter to determine whether it can generate a more optimal query plan. Although this strategy often can lead to a query plan that is significantly faster than a plan compiled without known parameter values, currently it works imperfectly both in SQL Server and in Azure SQL Database. Sometimes the parameter is not sniffed, and sometimes the parameter is sniffed but the generated plan is sub-optimal for the full set of parameter values in a workload. Microsoft includes query hints (directives) so that you can specify intent more deliberately and override the default behavior of parameter sniffing. Often, if you use hints, you can fix cases in which the default SQL Server or Azure SQL Database behavior is imperfect for a specific customer workload.

The next example demonstrates how the query processor can generate a plan that is sub-optimal both for performance and resource requirements. This example also shows that if you use a query hint, you can reduce query run time and resource requirements for your SQL database:

The setup code creates a table that has skewed data distribution. The optimal query plan differs based on which parameter is selected. Unfortunately, the plan caching behavior doesn't always recompile the query based on the most common parameter value. So, it's possible for a sub-optimal plan to be cached and used for many values, even when a different plan might be a better plan choice on average. The setup code then creates two stored procedures that are identical, except that one has a special query hint.

We recommend that you wait at least 10 minutes before you begin part 2 of the example, so that the results are distinct in the resulting telemetry data.

Each part of this example attempts to run a parameterized insert statement 1,000 times (to generate a sufficient load to use as a test data set). When it executes stored procedures, the query processor examines the parameter value that is passed to the procedure during its first compilation (parameter 'sniffing'). The processor caches the resulting plan and uses it for later invocations, even if the parameter value is different. The optimal plan might not be used in all cases. Sometimes you need to guide the optimizer to pick a plan that is better for the average case rather than the specific case from when the query was first compiled. In this example, the initial plan generates a 'scan' plan that reads all rows to find each value that matches the parameter:

Because we executed the procedure by using the value 1, the resulting plan was optimal for the value 1 but was sub-optimal for all other values in the table. The result likely isn't what you would want if you were to pick each plan randomly, because the plan performs more slowly and uses more resources.
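To make the reasoning concrete, here is a toy cost model in Python. It is not the SQL Server optimizer, and every number in it (the scan cost, the seek overhead, the 20,000 rows for the value 1) is an illustrative assumption. It only shows why a plan compiled for a skewed value can be expensive when reused for values that match a single row.

```python
# Toy cost model, NOT the real optimizer: all numbers are illustrative assumptions.
TABLE_PAGES = 1000            # hypothetical page reads for a full scan
SEEK_OVERHEAD = 3             # hypothetical fixed page reads for an index seek
rows_per_value = {1: 20000}   # skew: value 1 is very common, other values match one row

def matching_rows(value):
    return rows_per_value.get(value, 1)

def cost(plan, value):
    """Estimated page reads for running `plan` with a given parameter value."""
    if plan == "scan":
        return TABLE_PAGES
    # Seek: fixed overhead plus one lookup per matching row.
    return SEEK_OVERHEAD + matching_rows(value)

def sniffed_plan(first_value):
    """Pick the cheaper plan for the first value seen, then cache it."""
    return min(("scan", "seek"), key=lambda plan: cost(plan, first_value))

workload = list(range(2, 1002))   # 1,000 calls, each matching a single row

cached = sniffed_plan(1)          # compiled while the parameter was the skewed value 1
cost_cached = sum(cost(cached, v) for v in workload)

average_case = "seek"             # the plan a hint for the average case would favor
cost_hinted = sum(cost(average_case, v) for v in workload)

print(cached, cost_cached, cost_hinted)
```

In this model the scan is the right choice for the value 1, yet reusing it for the rest of the workload costs far more than the seek plan would.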

If you run the test with SET STATISTICS IO set to ON, the logical scan work in this example is done behind the scenes. You can see that there are 1,148 reads done by the plan (which is inefficient, if the average case is to return just one row):

The second part of the example uses a query hint to tell the optimizer to use a specific value during the compilation process. In this case, it forces the query processor to ignore the value that is passed as the parameter, and instead to assume UNKNOWN. This refers to a value that has the average frequency in the table (ignoring skew). The resulting plan is a seek-based plan that is faster and uses fewer resources, on average, than the plan in part 1 of this example:

You can see the effect in the sys.resource_stats table (there is a delay from the time that you execute the test and when the data populates the table). For this example, part 1 executed during the 22:25:00 time window, and part 2 executed at 22:35:00. The earlier time window used more resources in that time window than the later one (because of plan efficiency improvements).

Note

Although the volume in this example is intentionally small, the effect of sub-optimal parameters can be substantial, especially on larger databases. The difference, in extreme cases, can be between seconds for fast cases and hours for slow cases.

You can examine sys.resource_stats to determine whether a test uses more or fewer resources than another test. When you compare data, separate the timing of tests so that they are not in the same 5-minute window in the sys.resource_stats view. The goal of the exercise is to minimize the total amount of resources used, and not to minimize the peak resources. Generally, optimizing a piece of code for latency also reduces resource consumption. Make sure that the changes you make to an application are necessary, and that the changes don't negatively affect the customer experience for someone who might be using query hints in the application.
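A small helper can check whether two test runs would land in the same 5-minute window before you compare them. The sketch below assumes windows are aligned to 5-minute boundaries on the clock. The function names are hypothetical and the calendar date is arbitrary; only the times come from the example above.

```python
from datetime import datetime

WINDOW_SECONDS = 5 * 60  # the 5-minute window described above

def window_start(ts):
    """Round a timestamp down to the start of its 5-minute window."""
    seconds = ts.hour * 3600 + ts.minute * 60 + ts.second
    floored = seconds - seconds % WINDOW_SECONDS
    return ts.replace(
        hour=floored // 3600,
        minute=(floored % 3600) // 60,
        second=0,
        microsecond=0,
    )

def share_window(a, b):
    """True when both timestamps fall into the same 5-minute window."""
    return window_start(a) == window_start(b)

part1 = datetime(2024, 1, 1, 22, 25, 0)   # part 1 of the example
part2 = datetime(2024, 1, 1, 22, 35, 0)   # part 2 of the example
print(share_window(part1, part2))
```

If the check returns True for your own runs, wait and rerun one of the tests so that their resource usage is reported separately.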

If a workload has a set of repeating queries, often it makes sense to capture and validate the optimality of your plan choices because it drives the minimum resource size unit required to host the database. After you validate it, occasionally reexamine the plans to help you make sure that they have not degraded. You can learn more about query hints (Transact-SQL).

Very large database architectures

Before the release of the Hyperscale service tier for single databases in Azure SQL Database, customers used to hit capacity limits for individual databases. These capacity limits still exist for pooled databases in elastic pools and instance databases in managed instances. The following two sections discuss two options for solving problems with very large databases in Azure SQL Database when you cannot use the Hyperscale service tier.

Cross-database sharding

Because Azure SQL Database runs on commodity hardware, the capacity limits for an individual database are lower than for a traditional on-premises SQL Server installation. Some customers use sharding techniques to spread database operations over multiple databases when the operations don't fit inside the limits of an individual database in Azure SQL Database. Most customers who use sharding techniques in Azure SQL Database split their data on a single dimension across multiple databases. For this approach, you need to understand that OLTP applications often perform transactions that apply to only one row or to a small group of rows in the schema.

Note

SQL Database now provides a library to assist with sharding. For more information, see Elastic Database client library overview.

For example, if a database has customer name, order, and order details (like the traditional example Northwind database that ships with SQL Server), you could split this data into multiple databases by grouping a customer with the related order and order detail information. You can guarantee that the customer's data stays in an individual database. The application would split different customers across databases, effectively spreading the load across multiple databases. With sharding, customers not only can avoid the maximum database size limit, but Azure SQL Database also can process workloads that are significantly larger than the limits of the different compute sizes, as long as each individual database fits into its DTU.

Although database sharding doesn't reduce the aggregate resource capacity for a solution, it's highly effective at supporting very large solutions that are spread over multiple databases. Each database can run at a different compute size to support very large, 'effective' databases with high resource requirements.
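The customer-based split described above can be sketched as a routing function that maps each customer key to one database. This is a minimal illustration that uses hash-modulo placement, not the Elastic Database client library. The shard names are hypothetical.

```python
import hashlib

# Hypothetical shard database names, for illustration only.
SHARDS = [
    "customers_shard_0",
    "customers_shard_1",
    "customers_shard_2",
    "customers_shard_3",
]

def shard_for(customer_id, shards=SHARDS):
    """Map a customer key to one shard by using a stable hash."""
    digest = hashlib.sha256(str(customer_id).encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(shards)
    return shards[index]

# A customer, and therefore its orders and order details, always routes
# to the same database.
placement = {cid: shard_for(cid) for cid in range(1, 1001)}

per_shard = {name: 0 for name in SHARDS}
for name in placement.values():
    per_shard[name] += 1
print(per_shard)
```

One design caveat: with hash-modulo placement, changing the number of shards remaps most keys. Keeping an explicit lookup table from key to database avoids that at the cost of maintaining the table.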

Functional partitioning

SQL Server users often combine many functions in an individual database. For example, if an application has logic to manage inventory for a store, that database might have logic associated with inventory, tracking purchase orders, stored procedures, and indexed or materialized views that manage end-of-month reporting. This technique makes it easier to administer the database for operations like backup, but it also requires you to size the hardware to handle the peak load across all functions of an application.

If you use a scale-out architecture in Azure SQL Database, it's a good idea to split different functions of an application into different databases. By using this technique, each application scales independently. As an application becomes busier (and the load on the database increases), the administrator can choose independent compute sizes for each function in the application. At the limit, with this architecture, an application can be larger than a single commodity machine can handle because the load is spread across multiple machines.

Batch queries

For applications that access data by using high-volume, frequent, ad hoc querying, a substantial amount of response time is spent on network communication between the application tier and the Azure SQL Database tier. Even when both the application and Azure SQL Database are in the same data center, the network latency between the two might be magnified by a large number of data access operations. To reduce the network round trips for the data access operations, consider using the option to either batch the ad hoc queries, or to compile them as stored procedures. If you batch the ad hoc queries, you can send multiple queries as one large batch in a single trip to Azure SQL Database. If you compile ad hoc queries in a stored procedure, you could achieve the same result as if you batch them. Using a stored procedure also gives you the benefit of increasing the chances of caching the query plans in Azure SQL Database so you can use the stored procedure again.

Some applications are write-intensive. Sometimes you can reduce the total IO load on a database by considering how to batch writes together. Often, this is as simple as using explicit transactions instead of auto-commit transactions in stored procedures and ad hoc batches. For an evaluation of different techniques you can use, see Batching techniques for SQL Database applications in Azure. Experiment with your own workload to find the right model for batching. Be sure to understand that a model might have slightly different transactional consistency guarantees. Finding the right workload that minimizes resource use requires finding the right combination of consistency and performance trade-offs.
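The difference between auto-commit writes and one explicit transaction can be sketched locally. The example below uses Python's sqlite3 module purely as a stand-in (an assumption; it says nothing about Azure SQL Database IO costs), and the events table is hypothetical. It counts commits rather than measuring time.

```python
import sqlite3

# isolation_level=None puts this sqlite3 connection in autocommit mode,
# so transactions only exist where the code opens them explicitly.
conn = sqlite3.connect(":memory:", isolation_level=None)
conn.execute("CREATE TABLE events (id INTEGER PRIMARY KEY, payload TEXT)")

rows = [("event-%d" % i,) for i in range(1000)]

def insert_autocommit(conn, rows):
    """Each statement is its own implicit transaction: one commit per row."""
    for row in rows:
        conn.execute("INSERT INTO events (payload) VALUES (?)", row)
    return len(rows)  # number of commits performed

def insert_batched(conn, rows):
    """All rows inside one explicit transaction: a single commit."""
    conn.execute("BEGIN")
    try:
        conn.executemany("INSERT INTO events (payload) VALUES (?)", rows)
        conn.execute("COMMIT")
    except Exception:
        conn.execute("ROLLBACK")  # the whole batch is undone together
        raise
    return 1

commits_auto = insert_autocommit(conn, rows)
commits_batched = insert_batched(conn, rows)
total = conn.execute("SELECT COUNT(*) FROM events").fetchone()[0]
print(commits_auto, commits_batched, total)
```

The rollback branch shows the consistency trade-off mentioned above: in the batched version a failure discards every row in the batch, while the auto-commit version keeps the rows written before the failure.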

Application-tier caching

Some database applications have read-heavy workloads. Caching layers might reduce the load on the database and might potentially reduce the compute size required to support a database by using Azure SQL Database. With Azure Cache for Redis, if you have a read-heavy workload, you can read the data once (or perhaps once per application-tier machine, depending on how it is configured), and then store that data outside your SQL database. This is a way to reduce database load (CPU and read IO), but there is an effect on transactional consistency because the data being read from the cache might be out of sync with the data in the database. Although in many applications some level of inconsistency is acceptable, that's not true for all workloads. You should fully understand any application requirements before you implement an application-tier caching strategy.
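The trade-off between fewer database reads and possibly stale data can be seen in a minimal read-through style cache with a time-to-live. In this sketch a plain dictionary stands in for an external cache such as Azure Cache for Redis, and load_product is a hypothetical database read. Neither reflects a specific client API.

```python
import time

class TtlCache:
    """Tiny in-process cache sketch; a dict stands in for an external cache."""

    def __init__(self, loader, ttl_seconds, clock=time.monotonic):
        self._loader = loader      # function that reads from the database
        self._ttl = ttl_seconds    # how stale a cached value may become
        self._clock = clock        # injectable so the example is deterministic
        self._entries = {}         # key -> (value, expiry time)

    def get(self, key):
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now < entry[1]:
            return entry[0]        # cache hit: no database read
        value = self._loader(key)  # miss or expired entry: read the database
        self._entries[key] = (value, now + self._ttl)
        return value

db_reads = []

def load_product(product_id):
    """Hypothetical database read; records each call so reads can be counted."""
    db_reads.append(product_id)
    return {"id": product_id, "name": "product-%d" % product_id}

fake_now = [0.0]
cache = TtlCache(load_product, ttl_seconds=30, clock=lambda: fake_now[0])

cache.get(7)
cache.get(7)          # served from the cache, no second database read
fake_now[0] = 31.0    # advance the fake clock past the time-to-live
cache.get(7)          # expired entry triggers a fresh database read
print(len(db_reads))
```

The time-to-live is the knob that expresses how much inconsistency the application tolerates: a longer value saves more reads but lets cached data drift further from the database.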

Next steps

- For more information about DTU-based service tiers, see DTU-based purchasing model.

- For more information about vCore-based service tiers, see vCore-based purchasing model.

- For more information about elastic pools, see What is an Azure elastic pool?

- For information about performance and elastic pools, see When to consider an elastic pool

This article provides answers to frequently asked questions for customers considering a database in the Azure SQL Database Hyperscale service tier, referred to as just Hyperscale in the remainder of this FAQ. This article describes the scenarios that Hyperscale supports and the features that are compatible with Hyperscale.

- This FAQ is intended for readers who have a brief understanding of the Hyperscale service tier and are looking to have their specific questions and concerns answered.

- This FAQ isn’t meant to be a guidebook or answer questions on how to use a Hyperscale database. For an introduction to Hyperscale, we recommend you refer to the Azure SQL Database Hyperscale documentation.

General Questions

What is a Hyperscale database

A Hyperscale database is an Azure SQL database in the Hyperscale service tier that is backed by the Hyperscale scale-out storage technology. A Hyperscale database supports up to 100 TB of data and provides high throughput and performance, as well as rapid scaling to adapt to the workload requirements. Scaling is transparent to the application – connectivity, query processing, etc. work like any other Azure SQL database.

What resource types and purchasing models support Hyperscale

The Hyperscale service tier is only available for single databases using the vCore-based purchasing model in Azure SQL Database.

How does the Hyperscale service tier differ from the General Purpose and Business Critical service tiers

The vCore-based service tiers are differentiated based on database availability and storage type, performance, and maximum size, as described in the following table.

| | Resource type | General Purpose | Hyperscale | Business Critical |
|---|---|---|---|---|
| Best for | All | Offers budget-oriented balanced compute and storage options. | Most business workloads. Autoscaling storage size up to 100 TB, fast vertical and horizontal compute scaling, fast database restore. | OLTP applications with high transaction rate and low IO latency. Offers highest resilience to failures and fast failovers using multiple synchronously updated replicas. |
| Resource type | | Single database / elastic pool / managed instance | Single database | Single database / elastic pool / managed instance |
| Compute size | Single database / elastic pool * | 1 to 80 vCores | 1 to 80 vCores* | 1 to 80 vCores |
| | Managed instance | 8, 16, 24, 32, 40, 64, 80 vCores | N/A | 8, 16, 24, 32, 40, 64, 80 vCores |
| Storage type | All | Premium remote storage (per instance) | De-coupled storage with local SSD cache (per instance) | Super-fast local SSD storage (per instance) |
| Storage size | Single database / elastic pool * | 5 GB – 4 TB | Up to 100 TB | 5 GB – 4 TB |
| | Managed instance | 32 GB – 8 TB | N/A | 32 GB – 4 TB |
| IOPS | Single database | 500 IOPS per vCore with 7000 maximum IOPS | Hyperscale is a multi-tiered architecture with caching at multiple levels. Effective IOPS will depend on the workload. | 5000 IOPS with 200,000 maximum IOPS |
| | Managed instance | Depends on file size | N/A | 1375 IOPS/vCore |
| Availability | All | 1 replica, no Read Scale-out, no local cache | Multiple replicas, up to 4 Read Scale-out, partial local cache | 3 replicas, 1 Read Scale-out, zone-redundant HA, full local storage |
| Backups | All | RA-GRS, 7-35 day retention (7 days by default) | RA-GRS, 7 day retention, constant time point-in-time recovery (PITR) | RA-GRS, 7-35 day retention (7 days by default) |

* Elastic pools are not supported in the Hyperscale service tier

Who should use the Hyperscale service tier

The Hyperscale service tier is intended for customers who have large on-premises SQL Server databases and want to modernize their applications by moving to the cloud, or for customers who are already using Azure SQL Database and want to significantly expand the potential for database growth. Hyperscale is also intended for customers who seek both high performance and high scalability. With Hyperscale, you get:

- Database size up to 100 TB

- Fast database backups regardless of database size (backups are based on storage snapshots)

- Fast database restores regardless of database size (restores are from storage snapshots)

- Higher log throughput regardless of database size and the number of vCores

- Read Scale-out using one or more read-only replicas, used for read offloading and as hot standbys.

- Rapid scale-up of compute, in constant time, to accommodate a heavy workload, followed by scale-down, also in constant time. This is similar to scaling up and down between a P6 and a P11, for example, but much faster because it is not a size of data operation.

What regions currently support Hyperscale

The Hyperscale service tier is currently available in the regions listed under Azure SQL Database Hyperscale Overview.

Can I create multiple Hyperscale databases per logical server

Yes. For more information and limits on the number of Hyperscale databases per logical server, see SQL Database resource limits for single and pooled databases on a logical server.

What are the performance characteristics of a Hyperscale database

The Hyperscale architecture provides high performance and throughput while supporting large database sizes.


What is the scalability of a Hyperscale database

Hyperscale provides rapid scalability based on your workload demand.

- Scaling Up/Down

  With Hyperscale, you can scale up the primary compute size in terms of resources like CPU and memory, and then scale down, in constant time. Because the storage is shared, scaling up and scaling down is not a size of data operation.

- Scaling In/Out

  With Hyperscale, you also get the ability to provision one or more additional compute replicas that you can use to serve your read requests. This means that you can use these additional compute replicas as read-only replicas to offload your read workload from the primary compute. In addition to read-only, these replicas also serve as hot-standbys in case of a failover from the primary.

  Provisioning of each of these additional compute replicas can be done in constant time and is an online operation. You can connect to these additional read-only compute replicas by setting the ApplicationIntent argument on your connection string to ReadOnly. Any connections with the ReadOnly application intent are automatically routed to one of the additional read-only compute replicas.
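As a sketch of the ApplicationIntent setting, the following Python helper assembles an ADO.NET-style connection string and adds the read-only intent on request. The helper name, server name, and database name are hypothetical, and credentials are deliberately left out.

```python
def build_connection_string(server, database, read_only=False):
    """Assemble an ADO.NET-style connection string from key/value pairs."""
    parts = {
        "Server": "tcp:%s,1433" % server,
        "Database": database,
        "Encrypt": "True",
    }
    if read_only:
        # Ask for a read-only replica instead of the primary compute.
        parts["ApplicationIntent"] = "ReadOnly"
    return ";".join("%s=%s" % (key, value) for key, value in parts.items()) + ";"

# Hypothetical server and database names.
primary = build_connection_string("myserver.database.windows.net", "mydb")
replica = build_connection_string(
    "myserver.database.windows.net", "mydb", read_only=True
)
print(replica)
```

Keeping two connection strings, one per intent, lets the application send reporting or read-heavy queries to a replica while writes continue to use the primary.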

Deep Dive Questions

Can I mix Hyperscale and single databases in a single logical server

Yes, you can.

Does Hyperscale require my application programming model to change

No, your application programming model stays as is. You use your connection string as usual and the other regular ways to interact with your Hyperscale database.

What transaction isolation level is the default in a Hyperscale database

On the primary replica, the default transaction isolation level is RCSI (Read Committed Snapshot Isolation). On the Read Scale-out secondary replicas, the default isolation level is Snapshot.

Can I bring my on-premises or IaaS SQL Server license to Hyperscale

Yes, Azure Hybrid Benefit is available for Hyperscale. Every SQL Server Standard core can map to 1 Hyperscale vCore. Every SQL Server Enterprise core can map to 4 Hyperscale vCores. You don’t need a SQL license for secondary replicas. The Azure Hybrid Benefit price will be automatically applied to Read Scale-out (secondary) replicas.
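The core-to-vCore mapping above is simple arithmetic. The sketch below applies the two ratios stated in the answer; the function name is hypothetical and the example core counts are made up.

```python
# Ratios taken from the answer above: vCores covered per licensed core.
VCORES_PER_CORE = {"standard": 1, "enterprise": 4}

def covered_vcores(standard_cores=0, enterprise_cores=0):
    """Return how many Hyperscale vCores the given licensed cores can map to."""
    return (
        standard_cores * VCORES_PER_CORE["standard"]
        + enterprise_cores * VCORES_PER_CORE["enterprise"]
    )

# Example with made-up numbers: 8 Standard cores and 4 Enterprise cores.
print(covered_vcores(standard_cores=8, enterprise_cores=4))
```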

What kind of workloads is Hyperscale designed for

Hyperscale supports all SQL Server workloads, but it is primarily optimized for OLTP. You can bring Hybrid (HTAP) and Analytical (data mart) workloads as well.

How can I choose between Azure SQL Data Warehouse and Azure SQL Database Hyperscale

If you are currently running interactive analytics queries using SQL Server as a data warehouse, Hyperscale is a great option because you can host small and mid-size data warehouses (such as a few TB up to 100 TB) at a lower cost, and you can migrate your SQL Server data warehouse workloads to Hyperscale with minimal T-SQL code changes.

If you are running data analytics on a large scale with complex queries and sustained ingestion rates higher than 100 MB/s, or using Parallel Data Warehouse (PDW), Teradata, or other Massively Parallel Processing (MPP) data warehouses, SQL Data Warehouse may be the best choice.

Hyperscale Compute Questions

Can I pause my compute at any time

Not at this time. However, you can scale your compute and number of replicas down to reduce cost during non-peak times.

Can I provision a compute replica with extra RAM for my memory-intensive workload

No. To get more RAM, you need to upgrade to a higher compute size. For more information, see Hyperscale storage and compute sizes.

Can I provision multiple compute replicas of different sizes

No.

How many Read Scale-out replicas are supported

The Hyperscale databases are created with one Read Scale-out replica (two replicas including primary) by default. You can scale the number of read-only replicas between 0 and 4 using Azure portal or REST API.

For high availability, do I need to provision additional compute replicas

In Hyperscale databases, data resiliency is provided at the storage level. You only need one replica to provide resiliency. When the compute replica is down, a new replica is created automatically with no data loss.

However, if there’s only one replica, it may take some time to build the local cache in the new replica after failover. During the cache rebuild phase, the database fetches data directly from the page servers, resulting in higher storage latency and degraded query performance.

For mission-critical apps that require high availability with minimal failover impact, you should provision at least 2 compute replicas including the primary compute replica. This is the default configuration. That way there is a hot-standby replica available that serves as a failover target.

Data Size and Storage Questions

What is the maximum database size supported with Hyperscale

100 TB.

What is the size of the transaction log with Hyperscale

The transaction log with Hyperscale is practically infinite. You do not need to worry about running out of log space on a system that has a high log throughput. However, the log generation rate might be throttled for workloads that write aggressively and continuously. The peak sustained log generation rate is 100 MB/s.

Does my tempdb scale as my database grows

Your tempdb database is located on local SSD storage and is sized proportionally to the compute size that you provision. Your tempdb is optimized to provide maximum performance benefits. tempdb size is not configurable and is managed for you.

Does my database size automatically grow, or do I have to manage the size of data files

Your database size automatically grows as you insert/ingest more data.

What is the smallest database size that Hyperscale supports or starts with

40 GB. A Hyperscale database is created with a starting size of 10 GB. It then grows by 10 GB every 10 minutes until it reaches 40 GB. Each of these 10 GB chunks is allocated in a different page server in order to provide more IOPS and higher I/O parallelism. Because of this optimization, even if you choose an initial database size smaller than 40 GB, the database will grow to at least 40 GB automatically.
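As a rough illustration of the growth schedule described above, the following Python sketch lists the allocated size over time. It assumes growth steps occur exactly every 10 minutes, which is a simplification; the function name and parameters are hypothetical and are not part of any Azure API.

```python
def initial_growth_schedule(start_gb=10, step_gb=10, interval_min=10, target_gb=40):
    """Return (minutes_elapsed, size_gb) pairs until the target size is reached.

    Assumption: growth happens in fixed steps at fixed intervals, as the
    FAQ answer describes; real timing in the service may differ.
    """
    schedule = [(0, start_gb)]
    minutes, size = 0, start_gb
    while size < target_gb:
        minutes += interval_min
        size = min(size + step_gb, target_gb)
        schedule.append((minutes, size))
    return schedule


for minutes, size_gb in initial_growth_schedule():
    print(f"t+{minutes} min: {size_gb} GB")
```

Under these assumptions, a new database reaches the 40 GB minimum about 30 minutes after creation.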

In what increments does my database size grow

Each data file grows by 10 GB. Multiple data files may grow at the same time.

Is the storage in Hyperscale local or remote

In Hyperscale, data files are stored in Azure standard storage. Data is fully cached on local SSD storage, on page servers that are close to the compute replicas. In addition, compute replicas have data caches on local SSD and in memory, to reduce the frequency of fetching data from remote page servers.

Can I manage or define files or filegroups with Hyperscale

No. Data files are added automatically. The common reasons for creating additional filegroups do not apply in the Hyperscale storage architecture.

Can I provision a hard cap on the data growth for my database

No.

How are data files laid out with Hyperscale

The data files are controlled by page servers, with one page server per data file. As the data size grows, data files and associated page servers are added.

Is database shrink supported

No.

Is data compression supported

Yes, including row, page, and columnstore compression.

If I have a huge table, does my table data get spread out across multiple data files

Yes. The data pages associated with a given table can end up in multiple data files, which are all part of the same filegroup. SQL Server uses proportional fill strategy to distribute data over data files.

Data Migration Questions

Can I move my existing Azure SQL databases to the Hyperscale service tier

Yes. You can move your existing Azure SQL databases to Hyperscale. This is a one-way migration. You can’t move databases from Hyperscale to another service tier. For proofs of concept (POCs), we recommend you make a copy of your database and migrate the copy to Hyperscale.

Can I move my Hyperscale databases to other service tiers

No. At this time, you can’t move a Hyperscale database to another service tier.

Do I lose any functionality or capabilities after migration to the Hyperscale service tier

Yes. Some Azure SQL Database features are not yet supported in Hyperscale, including but not limited to long-term backup retention. After you migrate your databases to Hyperscale, those features stop working. We expect these limitations to be temporary.

Can I move my on-premises SQL Server database, or my SQL Server database in a cloud virtual machine to Hyperscale

Yes. You can use all existing migration technologies to migrate to Hyperscale, including transactional replication, and any other data movement technologies (Bulk Copy, Azure Data Factory, Azure Databricks, SSIS). See also the Azure Database Migration Service, which supports many migration scenarios.

What is my downtime during migration from an on-premises or virtual machine environment to Hyperscale, and how can I minimize it

Downtime for migration to Hyperscale is the same as the downtime when you migrate your databases to other Azure SQL Database service tiers. You can use transactional replication to minimize downtime when migrating databases up to a few TB in size. For very large databases (10+ TB), consider migrating data using ADF, Spark, or other data movement technologies.

How much time would it take to bring in X amount of data to Hyperscale

Hyperscale is capable of consuming 100 MB/s of new/changed data, but the time needed to move data into Azure SQL databases is also affected by available network throughput, source read speed and the target database service level objective.
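To get a feel for what the 100 MB/s figure implies, the sketch below computes a best-case lower bound on load time. It assumes the volume of log generated equals the volume of data loaded and that nothing else (network, source read speed, service level objective) is the bottleneck, so real loads will take longer. The function name is hypothetical, and decimal units (1 GB = 1000 MB) are assumed.

```python
def min_ingest_hours(data_gb, log_rate_mb_per_s=100.0):
    """Best-case hours needed to ingest data_gb at the given log rate.

    Assumptions: log volume equals data volume, and the log rate is the
    only limit. Treat the result as a lower bound, not an estimate.
    """
    if data_gb < 0 or log_rate_mb_per_s <= 0:
        raise ValueError("data_gb must be >= 0 and log_rate_mb_per_s must be > 0")
    data_mb = data_gb * 1000  # decimal units: 1 GB = 1000 MB
    seconds = data_mb / log_rate_mb_per_s
    return seconds / 3600


for size_gb in (360, 1000, 10000):
    print(f"{size_gb} GB: at least {min_ingest_hours(size_gb):.1f} hours")
```

For example, 1 TB at a sustained 100 MB/s takes 10,000 seconds, or a little under 3 hours, before any other limiting factor is considered.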

Can I read data from blob storage and do fast load (like Polybase in SQL Data Warehouse)

You can have a client application read data from Azure Storage and load it into a Hyperscale database (just like you can with any other Azure SQL database). Polybase is currently not supported in Azure SQL Database. As an alternative for fast loading, you can use Azure Data Factory, or use a Spark job in Azure Databricks with the Spark connector for SQL. The Spark connector for SQL supports bulk insert.

It is also possible to bulk read data from Azure Blob storage using BULK INSERT or OPENROWSET. See Examples of Bulk Access to Data in Azure Blob Storage.

Simple recovery or bulk logging model is not supported in Hyperscale. Full recovery model is required to provide high availability and point-in-time recovery. However, Hyperscale log architecture provides better data ingest rate compared to other Azure SQL Database service tiers.

Does Hyperscale allow provisioning multiple nodes for parallel ingesting of large amounts of data

No. Hyperscale is a symmetric multi-processing (SMP) architecture and is not a massively parallel processing (MPP) or a multi-master architecture. You can only create multiple replicas to scale out read-only workloads.

What is the oldest SQL Server version supported for migration to Hyperscale

SQL Server 2005. For more information, see Migrate to a single database or a pooled database. For compatibility issues, see Resolving database migration compatibility issues.

Does Hyperscale support migration from other data sources such as Amazon Aurora, MySQL, PostgreSQL, Oracle, DB2, and other database platforms

Yes. Azure Database Migration Service supports many migration scenarios.

Business Continuity and Disaster Recovery Questions

What SLAs are provided for a Hyperscale database

See SLA for Azure SQL Database. Additional secondary compute replicas increase availability, up to 99.99% for a database with two or more secondary compute replicas.

Are the database backups managed for me by the Azure SQL Database service

Yes.

How often are the database backups taken

There are no traditional full, differential, and log backups for Hyperscale databases. Instead, there are regular storage snapshots of data files. Log that is generated is simply retained as-is for the configured retention period, allowing restore to any point in time within the retention period.

Does Hyperscale support point in time restore

Yes.

What is the Recovery Point Objective (RPO)/Recovery Time Objective (RTO) for database restore in Hyperscale

The RPO is 0 min. The RTO goal is less than 10 minutes, regardless of database size.

Does database backup affect compute performance on my primary or secondary replicas

No. Backups are managed by the storage subsystem, and leverage storage snapshots. They do not impact user workloads.

Can I perform geo-restore with a Hyperscale database

Yes. Geo-restore is fully supported. Unlike point-in-time restore, geo-restore requires a size-of-data operation. Data files are copied in parallel, so the duration of this operation depends primarily on the size of the largest file in the database, rather than on total database size. Geo-restore time will be significantly shorter if the database is restored in the Azure region that is paired with the region of the source database.

Can I set up geo-replication with Hyperscale database

Not at this time.

Can I take a Hyperscale database backup and restore it to my on-premises server, or on SQL Server in a VM

No. The storage format for Hyperscale databases is different from any released version of SQL Server, and you don’t control backups or have access to them. To take your data out of a Hyperscale database, you can extract it using any data movement technology, such as Azure Data Factory, Azure Databricks, or SSIS.

Cross-Feature Questions

Do I lose any functionality or capabilities after migration to the Hyperscale service tier

Yes. Some Azure SQL Database features are not supported in Hyperscale, including but not limited to long-term backup retention. After you migrate your databases to Hyperscale, those features stop working.

Will Polybase work with Hyperscale

No. Polybase is not supported in Azure SQL Database.

Does Hyperscale have support for R and Python

Not at this time.

Are compute nodes containerized

No. Hyperscale processes run on Service Fabric nodes (VMs), not in containers.

Performance Questions

How much write throughput can I push in a Hyperscale database

Transaction log throughput cap is set to 100 MB/s for any Hyperscale compute size. The ability to achieve this rate depends on multiple factors, including but not limited to workload type, client configuration, and having sufficient compute capacity on the primary compute replica to produce log at this rate.

How many IOPS do I get on the largest compute

IOPS and IO latency will vary depending on the workload patterns. If the data being accessed is cached on the compute replica, you will see similar IO performance as with local SSD.

Does my throughput get affected by backups

No. Compute is decoupled from the storage layer. This eliminates performance impact of backup.

Does my throughput get affected as I provision additional compute replicas

Because the storage is shared and there is no direct physical replication between primary and secondary compute replicas, the throughput on the primary replica is not directly affected by adding secondary replicas. However, we may throttle a workload that writes continuously and aggressively on the primary, so that log apply on secondary replicas and page servers can catch up. This avoids poor read performance on secondary replicas.

How do I diagnose and troubleshoot performance problems in a Hyperscale database

For most performance problems, particularly the ones not rooted in storage performance, common SQL Server diagnostic and troubleshooting steps apply. For Hyperscale-specific storage diagnostics, see SQL Hyperscale performance troubleshooting diagnostics.

Scalability Questions

How long would it take to scale up and down a compute replica

Scaling compute up or down should take 5-10 minutes regardless of data size.

Is my database offline while the scaling up/down operation is in progress

No. Scaling up and down is an online operation.

Should I expect connection drop when the scaling operations are in progress

Scaling up or down results in existing connections being dropped when a failover happens at the end of the scaling operation. Adding secondary replicas does not result in connection drops.
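Because existing connections are dropped at the end of a scaling operation, client code is commonly written to retry after a dropped connection. The following is a minimal sketch of that idea and is not taken from the FAQ itself: `run_with_retry` and its parameters are hypothetical names, and Python's built-in `ConnectionError` stands in for whatever exception your database driver raises when a connection is lost.

```python
import time


def run_with_retry(operation, attempts=5, base_delay_s=1.0,
                   retry_on=(ConnectionError,), sleep=time.sleep):
    """Call operation(); if it raises a retryable error, wait and try again.

    retry_on is an assumption: replace it with the exception types your
    driver raises for dropped connections.
    """
    for attempt in range(1, attempts + 1):
        try:
            return operation()
        except retry_on:
            if attempt == attempts:
                raise  # out of attempts: surface the last error
            # Exponential backoff between attempts: 1 s, 2 s, 4 s, ...
            sleep(base_delay_s * 2 ** (attempt - 1))
```

The `operation` callable should open a fresh connection each time it runs, since the connection that was dropped cannot be reused.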

Is the scaling up and down of compute replicas automatic or end-user triggered operation

End-user triggered. Scaling is not automatic.

Does the size of my tempdb database also grow as the compute is scaled up

Yes. The tempdb database will scale up automatically as the compute grows.

Can I provision multiple primary compute replicas, such as a multi-master system, where multiple primary compute heads can drive a higher level of concurrency

No. Only the primary compute replica accepts read/write requests. Secondary compute replicas only accept read-only requests.

Read Scale-out Questions

How many secondary compute replicas can I provision

We create one secondary replica for Hyperscale databases by default. If you want to adjust the number of replicas, you can do so using Azure portal or REST API.

How do I connect to these secondary compute replicas

You can connect to these additional read-only compute replicas by setting the ApplicationIntent argument on your connection string to ReadOnly. Any connections marked with ReadOnly are automatically routed to one of the additional read-only compute replicas.

How do I validate if I have successfully connected to secondary compute replica using SSMS or other client tools?

You can execute the following T-SQL query:

SELECT DATABASEPROPERTYEX ('<database_name>', 'Updateability')

The result is READ_ONLY if you are connected to a read-only secondary replica, and READ_WRITE if you are connected to the primary replica. Note that the database context must be set to the name of the Hyperscale database, not to the master database.

Can I create a dedicated endpoint for a Read Scale-out replica

No. You can only connect to Read Scale-out replicas by specifying ApplicationIntent=ReadOnly.
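The connection-string setting and the Updateability check described in the answers above can be sketched in Python as follows. This is an illustrative sketch only: the helper names, the server and database values, and the ODBC driver name are assumptions, authentication settings are deliberately omitted, and no connection is actually opened.

```python
def build_connection_string(server, database, read_only=False,
                            driver="ODBC Driver 17 for SQL Server"):
    """Build an ODBC-style connection string from placeholder values.

    Authentication keywords are left out; add whatever your environment
    requires. The driver name is an assumption about the client machine.
    """
    parts = [
        f"Driver={{{driver}}}",
        f"Server=tcp:{server},1433",
        f"Database={database}",
        "Encrypt=yes",
    ]
    if read_only:
        # Routes the connection to a read-only compute replica.
        parts.append("ApplicationIntent=ReadOnly")
    return ";".join(parts) + ";"


def updateability_query(database):
    """Return the T-SQL check from the FAQ (trusted database names only)."""
    return f"SELECT DATABASEPROPERTYEX('{database}', 'Updateability');"


def is_read_only_replica(updateability):
    """Interpret the query result: READ_ONLY means a secondary replica."""
    return updateability == "READ_ONLY"


print(build_connection_string("myserver.database.windows.net", "mydb", read_only=True))
print(updateability_query("mydb"))
```

With a driver library of your choice, you would open a connection using the generated string, run the query in the context of the Hyperscale database, and pass the returned value to `is_read_only_replica`.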

Does the system do intelligent load balancing of the read workload

No. A new connection with read-only intent is redirected to an arbitrary Read Scale-out replica.

Can I scale up/down the secondary compute replicas independently of the primary replica

No. The secondary compute replicas are also used as high availability failover targets, so they need to have the same configuration as the primary to provide expected performance after failover.

Do I get different tempdb sizing for my primary compute and my additional secondary compute replicas

No. Your tempdb database is configured based on the provisioned compute size, and your secondary compute replicas are the same size as the primary compute.

Can I add indexes and views on my secondary compute replicas

No. Hyperscale databases have shared storage, meaning that all compute replicas see the same tables, indexes, and views. If you want additional indexes optimized for reads on secondary, you must add them on the primary.

How much delay is there going to be between the primary and secondary compute replicas

Data latency from the time a transaction is committed on the primary to the time it is visible on a secondary depends on the current log generation rate. Typical data latency is in the low milliseconds.

Next Steps

For more information about the Hyperscale service tier, see Hyperscale service tier.